Out, damned spot

How to use ImageMagick to reduce OCR errors from redaction

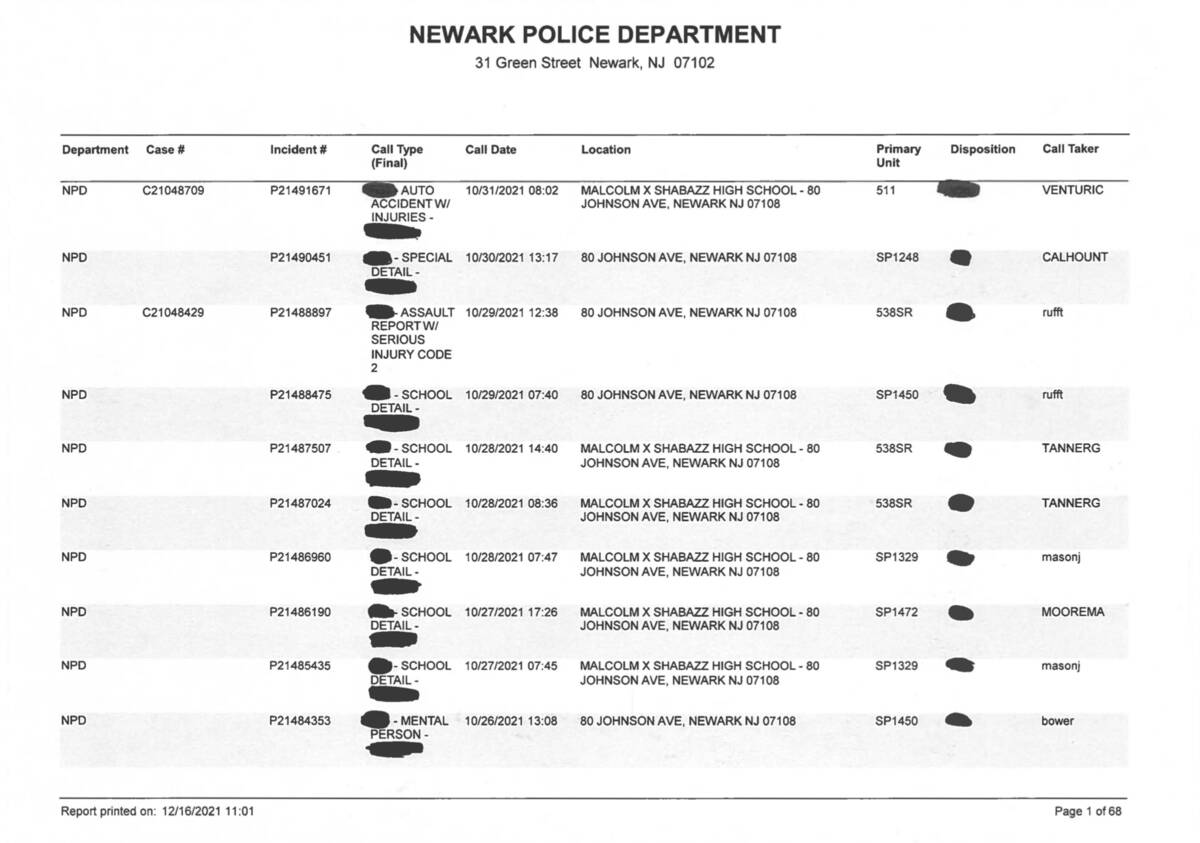

While reporting on New Jersey’s Malcolm X Shabazz High School, Chalkbeat reporter Patrick Wall sent the Newark Police Department a FOIA to obtain data on police calls. The police sent back the PDF shown at the top of this post.

My first instinct was to use an extraction tool like Tabula or PDFPlumber to reformat the PDF’s text into .csv format, so that we can analyze it and the reporter can explore it.

Unfortunately, there are two problems with that response: first, it’s a scan of a redacted printout, so there’s no actual text for us to read. Second, the redactions make OCR (Optical Character Recognition) unreliable.

OCR tools sometimes try to interpret redactions — especially messy, hand-made ones — as characters. This can introduce randomness into the results, scramble extraction tools, and prevent you from parsing the text successfully.

If you’ve tried to solve this in the past, it’s tempting to try to clean it up on the data side. But instead, we saved some time by altering the images before they were fed to the OCR tool. Here’s how it works.

Exploring the problems

Initially, I attempted to convert the images to text using Tesseract. The results were functional, but the redactions caused increasingly random character interpretations — like the strings of @MB- and Pees — which made the text difficult to analyze or parse in part two.

NPD 19153832 @MB- SCHOOL 04/10/2019 14:35 + MALCOLM X SHABAZZ HIGH SCHOOL - 80 s33skR SANTANGEL

DETAIL - JOHNSON AVE, NEWARK NJ 07108 OAN

ez»

NPD P19153365 04/10/2019 08:53 MALCOLM X SHABAZZ HIGH SCHOOL - 80 o& moraesl

Pees uane JOHNSON AVE, NEWARK NJ 07108

OUS POLICE

TASK - CODE

1

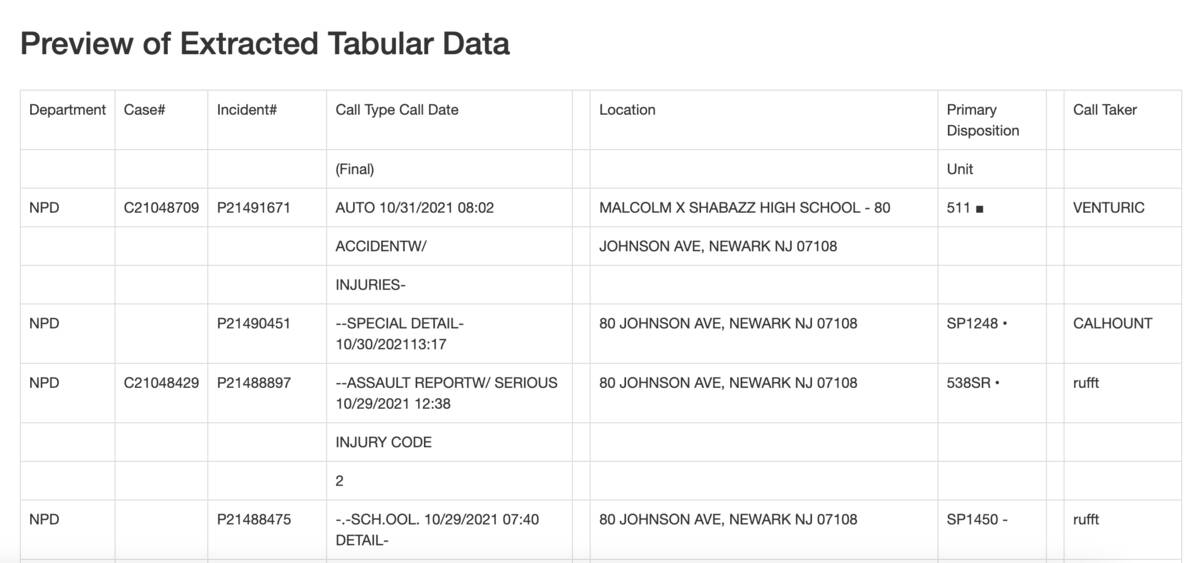

I ran it through a few other tools, including Document Cloud’s built-in OCR function and plain old Adobe Acrobat.

No matter what tool I used, the text still came out inaccurate. That’s also a problem for extraction, because it makes parsing in Python or JavaScript more difficult. And the Tabula output looked like a mess, with junk columns, random HTML characters, and inconsistent layouts from page to page:

If we didn’t need the call type field, we could have extracted the other columns and combined the data more or less easily. But the call type was one of the main fields of interest, so we couldn’t afford to throw it away.

The solutions

What if, instead of trying to OCR or parse around the blobs, we could remove them entirely? Here’s where ImageMagick came in. By using it to create a version of each page that only has the blobs on it, and then subtracting that from the original, we ended up with just the text, which would OCR cleanly.

Our solution is ideal for files that are not machine-generated and do not contain machine-readable content — meaning that someone has printed something out then scanned it, so it contains no text characters.

If you’re not sure whether that describes your PDF, you can test this by trying to highlight a line of text. If you can highlight, copy, and paste text from your PDF, there are probably easier ways to solve this problem. But if trying to highlight text just selects the entire page, this technique might be for you.

It’s possible to do this as a single processing step, but that can be hard to debug. Instead, we built a chain of operations in a shell script, each of which outputs to a new numbered directory. First, we created the images of each individual page using the pdftocairo utility:

mkdir -p original

pdftocairo -jpeg original.pdf original/page

Next, we used the dilate and erode filters to remove the text from the page. These filters expand and contract any light areas in an image, respectively. Our text was pretty thin, so a 2-pixel dilate removed it entirely, while leaving the thick redaction marks a little smaller but mostly still present. A 3-pixel erode then restored the size of the redactions to roughly their original size. Since the erode can’t restore text that’s been dilated to nothing, we were left with just the blobs.

mkdir -p 2_eroded

cd original

for f in *.jpg; do

convert $f -morphology Dilate Octagon:2 -morphology Erode Octagon:3 ../2_eroded/$f

done

cd ..

Next we took the isolated blobs and subtracted them from the original image. There’s a little fringing left, where the dilate/erode combo smoothed out the jagged edges of the redactions, but it was faint enough (and random enough) that OCR treated it as noise and ignored it.

mkdir -p 3_subtracted

cd original

for f in *.jpg; do

composite -compose Minus ../2_eroded/$f $f ../3_subtracted/$f

done

cd ..

Finally, the subtraction created a negative image, meaning that it was now white text on black. So the last step applied a negate filter to flip it back around.

mkdir -p 4_reverted

cd 3_subtracted

for f in *.jpg; do

convert $f -negate ../4_reverted/$f

done

This allowed me to remove the blobs. The output after all steps were completed looked something like this, almost like we took whiteout to the redaction marker:

You can do the same process in a one-line ImageMagick command, but it’s a lot harder to follow:

set -x

set -e

mkdir -p 5_oneliner

cd original

for f in *.jpg; do

convert \

$f \( $f -morphology Dilate Octagon:2 -morphology Erode Octagon:3 \) \

-compose Minus -composite -negate ../5_oneliner/$f

done

cd ..

Wrapping it up

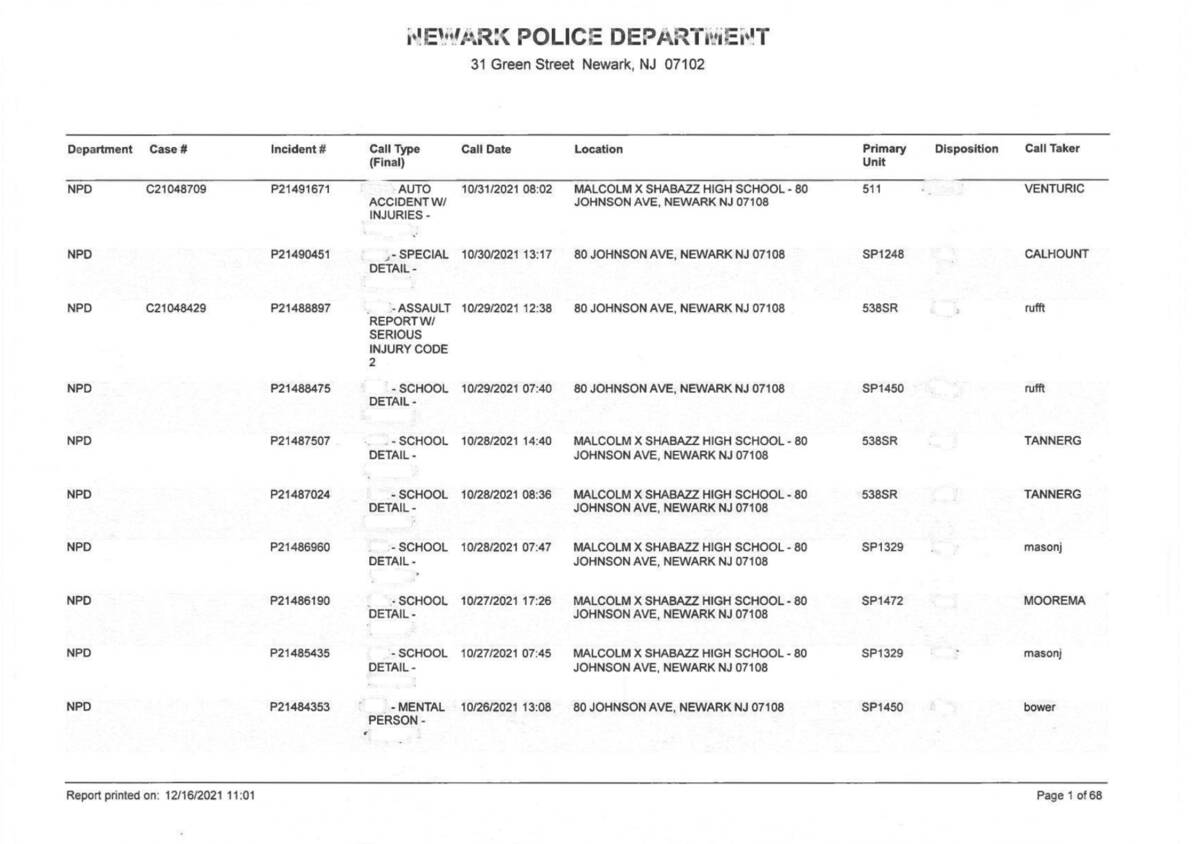

Removing the blobs vastly improved the output of every OCR tool, Tesseract included.

I tested Tesseract on the new images, first.

Here’s a look at the same chunk of text from earlier. The blobs are still present as = and », but the overall text is much clearer, and the structure is more reliable. Miscellaneous is interpreted correctly, instead of read partially as Pees due to the attached blob.

NPD P19153832 = SCHOOL 04/10/2019 14:35 | MALCOLM X SHABAZZ HIGH SCHOOL - 80 538SR SANTANGEL

DETAIL - JOHNSON AVE, NEWARK NJ 07108 OAN

NPD P19153365 » 04/10/2019 08:53 © MALCOLM X SHABAZZ HIGH SCHOOL - 80 moraesl

MISCELLANE JOHNSON AVE, NEWARK NJ 07108.

OUS POLICE

TASK - CODE

1

Unfortunately, even after that good scrub, the Tesseract text still contained a lot of random characters and errors. On other pages, the OCR dropped important structural logic cues that would make a parser more feasible — like the P at the beginning of each police report, which is an important cue.

So instead of relying on Tesseract, I reassembled the processed JPGs from ImageMagick, converted them back to PDF, OCR’d that PDF with ABBYY Finereader, and ran it back through Tabula again.

ABBYY was overall a bit more accurate than Tesseract, in this instance. If you’re investigating OCR tools more broadly, check out Open News’ comparison of a few popular tools. It’s from 2019, but many of the evaluations still stand.

Without the blobs, Tabula also worked better. It didn’t create extra columns and junk characters. Tabula still didn’t love the empty Case Number column, so I had to extract the columns in separate chunks. One extract looked just at the Incident Number with the Call Type description of interest; the other extracted the Case Number, Incident Number, and Call Date fields.

That gave me two datasets with reliable structural logic. After I combined them into a consistently structured single data set, all I needed was a bit of clever Excel logic.

Redactions like this don’t just remove information — they also limit journalists’ options for analyzing and reporting the contents of a records request. In some cases, they can kill a story and prevent information from reaching the public. So it’s important to develop a varied toolkit for working around limitations and recovering data.